REG Design

Three Devices

The Global Consciousness Project uses three different random event generators (REGs, also called RNGs). These are the PEAR portable REG, the Mindsong MicroREG, and the Orion RNG. All three use quantum-indeterminate electronic noise. They are designed for research applications and are widely used in laboratory experiments. They are subjected to calibration procedures based on large samples, typically a million or more trials, each the sum of 200 bits. In the GCP application, an unbiased mean is guaranteed by XOR logic. Although they have different fundamental noise sources, they all provide high-quality random sequences that are functionally equivalent.

PEAR type REG

We begin with the PEAR type REG, which is one of the devices developed for experimental research at the Princeton Engineering Anomalies Research (PEAR) laboratory. The PEAR program has used three generations of random event generators, with different primary sources of white noise, but important common features of design. The original benchmark experiment used a commercial random source developed by Elgenco, Inc., the core of which is proprietary. Elgenco’s engineering staff describe the proprietary module as solid state junctions with precision pre-amplifiers, implying processes that rely on quantum tunneling to produce an unpredictable, broad-spectrum white noise in the form of low-amplitude voltage fluctuations. The PEAR Portable REG, which was designed by John Bradish of the PEAR team, uses Johnson noise in resistors, which is so-called thermal noise and is also a quantum level phenomenon that produces a well-behaved broad-spectrum voltage fluctuation. Three of these have been used in the EGG network. The other random sources use quantum tunneling in diodes or transistors.

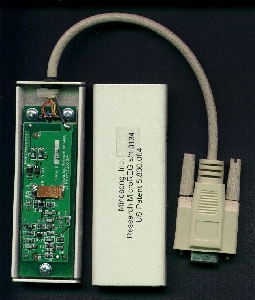

Mindsong MicroREG

The Mindsong MicroREG, a miniaturized REG device that was developed and prototyped at PEAR, is used in about half the nodes in the GCP network. The MicroREG was also designed by John Bradish, with a little help from Roger Nelson and others of the PEAR team. The design was provided to an independent company, Mindsong, Inc., for production and marketing. It uses a field effect transistor (FET) for the primary noise source, again relying on quantum tunneling, which provides completely uncorrelated fundamental events that compound to an unpredictable voltage fluctuation. The third device used by the GCP is the Orion, which is described below.

Orion RNG

The third device in the GCP network is the Orion RNG, designed by Dick Bierman and Joop Houtkooper in the Netherlands, as a project of their collaborative Foundation for Fundamental Research on Man and Matter (FREMM). The company that made the Orion is defunct as of 2010, so the following is historical documentation. The Orion was manufactured in Amsterdam and distributed by ICATT interactive media.

The following excerpt is drawn from the ICATT website’s full description of the device (now at archive.org):

Orion’s Random Number Generator consists of two independent analogue Zener diode based noise sources. Both signals are converted into random bitstreams, combined [using an XOR] and subsequently transmitted in the form of bytes to the RS-232 port of your computer. Special timing circuits ensure that crucial logical operations occur at moments that the device has stable signals. The baud rate is 9600. So the device is capable of supplying you with about 960 random bytes or 7600 random bits per second. Power is drawn from the RTS and TXD signal. (pins 4 and 2 of the D-25 connector). In order to work properly the RTS signal should be high (5 volts or higher) and one should not send bytes to the device!
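The throughput figures in the excerpt can be checked with a few lines of arithmetic. This is only a sketch: the 10-bit serial frame (one start bit, eight data bits, one stop bit) is an assumption, since the excerpt gives the baud rate but not the framing, and the calculation also assumes that all eight bits of every byte are used to build a trial.

```python
BAUD_RATE = 9600       # stated in the excerpt
BITS_PER_FRAME = 10    # assumption: 1 start + 8 data + 1 stop bit per byte
BITS_PER_TRIAL = 200   # GCP trial size

# Bytes delivered per second over the serial line.
bytes_per_second = BAUD_RATE // BITS_PER_FRAME

# Random data bits per second (8 data bits in each byte).
random_bits_per_second = bytes_per_second * 8

# Bytes and transmission time needed for one 200-bit trial,
# assuming every bit of every byte is used.
bytes_per_trial = BITS_PER_TRIAL // 8
seconds_per_trial = bytes_per_trial / bytes_per_second

print(bytes_per_second, random_bits_per_second)   # 960 7680
print(bytes_per_trial, round(seconds_per_trial, 4))
```

Under these assumptions the device supplies far more bits than the one 200-bit trial per second that the GCP records.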

In all cases, the design begins with white noise, for example in the PEAR Portable REG, a flat spectrum +/- 1 dB from 1100 Hz to 30 kHz. A low end cutoff at 1000 Hz eliminates frequencies at and below the data-sampling rate. This filtering, together with appropriate amplification and clipping, produces an approximate square wave with unpredictable temporal variation. Sampling at a constant 1 kHz rate is typical, although special sources have been constructed allowing higher rates (up to 2 MHz). Analog and digital processes are completely isolated by alternating these operations to exclude contamination of the analog noise train by digital pulses.

The XOR

To eliminate biases of the mean that might arise from such environmental stresses as temperature change, electromagnetic fields, or component aging, an exclusive or (XOR) mask is applied to the digital data stream. This is either an alternating 1/0 pattern (this is applied by the GCP software for the Orion devices, and in hardware for the PEAR devices) or a more complex mask comprising an array of all bytes with equal occurrence of 1/0 (this is in firmware in the Mindsong devices). Both types of XOR exclude bias of the mean, in principle, and the latter also excludes all short-lag bit-to-bit and byte-to-byte autocorrelations. Finally, data for the GCP are recorded as trials that are the sum of 200 bits drawn from the primary bit sequence. This sum across bits further mitigates any residual short-lag autocorrelations or other time-series predictability. The result is a data sequence of random numbers that conform to the appropriate theoretical binomial distribution and to its normal approximation.
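The effect of the alternating mask on the mean can be illustrated with a short simulation. This is a sketch and not the GCP or device code: the biased software source, the bias value of 0.52, and all function names are assumptions chosen for illustration.

```python
import random

def biased_bits(n, p_one, rng):
    """Simulate a source whose bits are 1 with probability p_one (hypothetical bias)."""
    return [1 if rng.random() < p_one else 0 for _ in range(n)]

def xor_alternating(bits):
    """XOR each bit with an alternating 1/0 mask (1, 0, 1, 0, ...)."""
    return [b ^ ((i + 1) % 2) for i, b in enumerate(bits)]

def trials(bits, bits_per_trial=200):
    """Sum consecutive, non-overlapping groups of bits into trial values."""
    return [sum(bits[i:i + bits_per_trial])
            for i in range(0, len(bits) - bits_per_trial + 1, bits_per_trial)]

rng = random.Random(12345)
n_trials = 5000
raw = biased_bits(200 * n_trials, 0.52, rng)   # deliberately biased toward 1
masked = xor_alternating(raw)

raw_mean = sum(trials(raw)) / n_trials
masked_mean = sum(trials(masked)) / n_trials
print(f"mean trial value without XOR: {raw_mean:.2f}")
print(f"mean trial value with XOR:    {masked_mean:.2f}")
```

Because the mask inverts exactly half of the bits, a constant bias toward 1 is converted into a bias toward 0 on the inverted half, and the two cancel in the 200-bit sum. The simulated mean without the mask sits near 104, while the masked mean sits near the theoretical 100.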

The final output of the physical REG unit is a sequence of bytes presented to the computer’s serial port, which are then formed by the acquisition software into a sequence of trials (sums of 200 bits), generated at 1 per second. This composite trial value, based on an N of 200 bits, has an expected mean of 100 and standard deviation of Sqrt(50), approximately 7.071. Calibrations on all of the devices show behavior that closely models theoretical expectations for mean, variance, skew and kurtosis. The calibration suite includes tests for runs, autocorrelation at raw and 50-trial block levels, conformance to the Arcsine distribution, and a number of other statistical criteria. See the full device description from Orion's website (via archive.org).
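The theoretical values against which calibrations are compared follow from the binomial distribution for 200 fair bits. The sketch below uses the standard closed-form binomial moments; the function name is hypothetical, and it is not part of the calibration suite.

```python
import math

def binomial_moments(n, p):
    """Return mean, variance, skewness, and excess kurtosis of Binomial(n, p)."""
    q = 1.0 - p
    mean = n * p
    variance = n * p * q
    skewness = (q - p) / math.sqrt(variance)
    excess_kurtosis = (1.0 - 6.0 * p * q) / variance
    return mean, variance, skewness, excess_kurtosis

mean, variance, skewness, excess_kurtosis = binomial_moments(200, 0.5)
sd = math.sqrt(variance)
print(mean, variance, round(sd, 3), skewness, excess_kurtosis)
```

For 200 bits with probability 0.5, the mean is 100, the variance is 50, the skewness is 0, and the excess kurtosis is -0.01, which is why the normal approximation is so close.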

REG Experiment Design

Given a sequence of trials with a well-defined expectation for the mean and standard deviation (100, 7.071), participants try to change the output according to pre-stated intentions. The situation is analogous to trying to get more "heads" or more "tails" while flipping an unbiased coin. The REG is in this sense a very sophisticated, high speed electronic coin-flipper, connected to a computer for reliable data collection in controlled experiments. The computer also allows immediate computation of statistics, and feedback of various kinds including graphic displays of the accumulating deviations from what is expected for an undisturbed random process.
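An accumulating deviation of the kind used for feedback displays can be computed as a running sum of each trial's departure from the expected mean of 100. The sketch below uses simulated trials from Python's pseudorandom generator rather than a hardware REG. The 1.96-sigma envelope is an assumption about what a reference curve might look like, not a description of the PEAR display software.

```python
import math
import random

EXPECTED_MEAN = 100.0
EXPECTED_SD = math.sqrt(50.0)

def cumulative_deviation(trial_values):
    """Running sum of (trial - expected mean), one entry per trial."""
    total = 0.0
    path = []
    for value in trial_values:
        total += value - EXPECTED_MEAN
        path.append(total)
    return path

def envelope(n_trials, z=1.96):
    """Chance-expectation bound on the cumulative deviation after n_trials."""
    return z * EXPECTED_SD * math.sqrt(n_trials)

# Simulated 200-bit trials (pseudorandom stand-in for a hardware source).
rng = random.Random(2024)
simulated = [bin(rng.getrandbits(200)).count("1") for _ in range(1000)]

path = cumulative_deviation(simulated)
print(f"final cumulative deviation: {path[-1]:.0f}")
print(f"two-sided 5% envelope at n=1000: +/-{envelope(1000):.1f}")
```

For an undisturbed random process the path wanders like a random walk and usually stays inside an envelope that widens with the square root of the number of trials.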

The basic design for laboratory experiments using the REG technology constitutes a final level of protection against artifactual sources of apparent effect. It is a "tripolar" design, where participants generate data under three conditions of pre-specified intention, namely to achieve high (HI) or low (LO) means, or to generate baseline (BL) data. In addition to this primary variable, a number of secondary parameters are represented as options that can be explored. These include the identity of the individual operators (participants), including robust comparisons that are possible among a subset of prolific operators who do many replications of the experiment. A related, simpler variable is the operator’s gender, including operator pairs who may be of the same or opposite sex, and who may be "bonded" pairs. Different sources for the data include the true random sources described earlier, and both hardware and algorithmic pseudorandom generators. Other parameters include the distance the operator is from the machine, up to thousands of miles, and analogous separations in time, up to several hours or a few days. The information density (bits per second) and the number of trials in runs have been varied, as have the instruction mode, feedback type, and the replication number or serial position. A number of publications giving details are available.
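One simple way to summarize data from the three intentions is a z-score per condition plus a z-score for the HI minus LO difference, both computed against the theoretical standard deviation. This is a generic textbook calculation offered as a sketch. It is not taken from the PEAR analysis procedures, and the trial values below are made up for illustration.

```python
import math

EXPECTED_MEAN = 100.0
EXPECTED_SD = math.sqrt(50.0)

def condition_z(trial_values):
    """Z-score of one condition's mean against the theoretical expectation."""
    n = len(trial_values)
    return (sum(trial_values) / n - EXPECTED_MEAN) * math.sqrt(n) / EXPECTED_SD

def hi_lo_difference_z(hi_trials, lo_trials):
    """Z-score for the HI minus LO mean difference, using the theoretical SD."""
    mean_hi = sum(hi_trials) / len(hi_trials)
    mean_lo = sum(lo_trials) / len(lo_trials)
    standard_error = EXPECTED_SD * math.sqrt(
        1.0 / len(hi_trials) + 1.0 / len(lo_trials))
    return (mean_hi - mean_lo) / standard_error

# Made-up trial values, for illustration only (not real data).
session = {
    "HI": [104, 99, 101, 108, 97, 103],
    "LO": [96, 101, 99, 93, 102, 98],
    "BL": [100, 102, 98, 101, 99, 100],
}
for label, values in session.items():
    print(label, round(condition_z(values), 3))
print("HI-LO", round(hi_lo_difference_z(session["HI"], session["LO"]), 3))
```

Comparing HI against LO uses each condition as a control for the other, so any constant device bias shared by both conditions cancels in the difference.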

Notes

Use of the REG/RNG devices in the GCP network

In the EGG project, any of the three devices, PEAR, Orion, MicroREG, may be used. In the application, they are functionally indistinguishable, although the EGG software configuration must specify which device is in use. In addition to the technical details of the device construction and operation, an adequate picture of the overall project requires a description of the physical data-acquisition system, and definition of the terms used for the specialized equipment.

At each of a growing number (about 40 in late 2001; over 60 in 2005) of host sites around the world, one of these well-qualified sources of random bits (REG or RNG) is attached to a computer running custom software to collect data continuously at the rate of one 200-bit trial per second. This local system is referred to as an "egg", and the whole network has been dubbed the "EGG," standing for "electrogaiagram," because its design is reminiscent of an EEG for the earth. (Of course this is just an evocative name; we are recording statistical parameters, not electrical measures.) The egg software regularly sends time-stamped, checksum-qualified data packets (each containing 5 min of data) to a server in Princeton. We access official timeservers to synchronize all the eggs to the second, to optimize the detection of inter-egg correlations. Occasional drifts occur, but any mis-synchronization is expected to have a conservative influence in our standard analyses. The server runs a program called the "basket" to manage the archival storage of the data.

Other programs on the server monitor the status of the network and do automatic analytical processing of the data. These programs and processing scripts are used to create up-to-date pages on the GCP Web site, providing public access to the complete history of the project’s results. The raw data are also made available for download by those interested in checking our analyses or conducting their own assessments of the data. Each day’s data are stored in a single file with a header that provides complete identifying information, followed by the trial outcomes (sums of 200 bits) for each egg and each second. With 40 eggs running, there are well over 3 million trials generated each day, and the complete database at the end of 2001 occupies approximately 3 gigabytes of storage in a highly compressed form.
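The data volumes quoted above follow directly from the one-trial-per-second rate. The sketch below assumes that every egg reports every second with no gaps, which will not hold exactly in practice, and the function names are hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60
PACKET_MINUTES = 5   # each data packet holds 5 minutes of data

def trials_per_day(n_eggs):
    """Trials generated per day by n_eggs, at one trial per egg per second."""
    return n_eggs * SECONDS_PER_DAY

def trials_per_packet():
    """Trials from one egg contained in a single 5-minute packet."""
    return PACKET_MINUTES * 60

def packets_per_day_per_egg():
    """Number of 5-minute packets one egg sends in a day."""
    return SECONDS_PER_DAY // (PACKET_MINUTES * 60)

print(trials_per_day(40))          # 3456000
print(trials_per_day(60))          # 5184000
print(trials_per_packet())         # 300
print(packets_per_day_per_egg())   # 288
```

With 40 eggs this gives 3,456,000 trials per day, consistent with the figure of well over 3 million.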

A thorough analysis of the data has been done for the Analysis 2004 project. This includes detailed assessment of the performance of the individual devices and a description of the normalized random data used in our rigorous formal analysis.

If you need more information you can find good general references on theory and practice of random number generation on the web. (The reference I previously had went away.)

A version of this page with some links to background material is available at REG experimental design. A question that may be relevant for use of these well-designed devices is whether they might be affected by power line influences.